This is not a step-by-step guide to upgrading from 11.1.0.7 to 11.2.0.4. An earlier post shows the upgrade from 11.1.0.7 to 11.2.0.3; this post is a follow-up that mainly highlights the differences. For Oracle documentation and useful metalink notes, refer to the previous post. The 11.1 environment used here is a clone of the one used for the previous post.

The first difference encountered during the upgrade to 11.2.0.4 is with cluvfy.

./runcluvfy.sh stage -pre crsinst -upgrade -n rac1,rac2 -rolling -src_crshome /opt/crs/oracle/product/11.1.0/crs -dest_crshome /opt/app/11.2.0/grid -dest_version 11.2.0.4.0 -fixup -verbose

Running the cluvfy that came with the installation media flagged several prerequisites as failed. This seems to be an issue or bug in the 11.2.0.4 cluvfy, as the prerequisites flagged as failed passed when evaluated with an 11.2.0.3 cluvfy, and the checked values have not changed from 11.2.0.3 to 11.2.0.4. For the most part the failures occurred when evaluating the remote node. If the node running cluvfy was changed (the earlier remote node becoming the local node), the prerequisites previously flagged as failed passed, and the same prerequisites were flagged as failed on the new remote node. In short, the runcluvfy.sh that comes with the 11.2.0.4 installation media (in file p13390677_112040_Linux-x86-64_3of7.zip) is not useful for evaluating whether the upgrade prerequisites are met. Following is the list of prerequisites that had issues. cluvfy was run from the node called rac1 (local node), so rac2 is the remote node.

Check: Free disk space for "rac2:/opt/app/11.2.0/grid,rac2:/tmp"

Path                  Node Name     Mount point   Available     Required      Status

----------------      ------------  ------------  ------------  ------------  ------------

/opt/app/11.2.0/grid  rac2          UNKNOWN       NOTAVAIL      7.5GB         failed

/tmp                  rac2          UNKNOWN       NOTAVAIL      7.5GB         failed

Result: Free disk space check failed for "rac2:/opt/app/11.2.0/grid,rac2:/tmp"

cluvfy seems unable to get the space usage from the remote node. When cluvfy was run from rac2, the space check on rac2 passed and the space check on rac1 failed.

Checking for Oracle patch "11724953" in home "/opt/crs/oracle/product/11.1.0/crs".

Node Name     Applied                   Required                  Comment

------------  ------------------------  ------------------------  ----------

rac2          missing                   11724953                  failed

rac1          11724953                  11724953                  passed

Result: Check for Oracle patch "11724953" in home "/opt/crs/oracle/product/11.1.0/crs" failed

Patch 11724953 (2011 April CRS PSU) must be present in the 11.1 environment before the upgrade to 11.2.0.4, and cluvfy is unable to verify this on the remote node. This can be checked manually with OPatch.

Check: TCP connectivity of subnet "192.168.0.0"

Source                          Destination                     Connected?

------------------------------  ------------------------------  ----------------

rac1:192.168.0.85               rac2:192.168.0.85               failed

ERROR:

PRVF-7617 : Node connectivity between "rac1 : 192.168.0.85" and "rac2 : 192.168.0.85" failed

rac1:192.168.0.85               rac2:192.168.0.89               failed

ERROR:

PRVF-7617 : Node connectivity between "rac1 : 192.168.0.85" and "rac2 : 192.168.0.89" failed

rac1:192.168.0.85               rac1:192.168.0.89               failed

ERROR:

PRVF-7617 : Node connectivity between "rac1 : 192.168.0.85" and "rac1 : 192.168.0.89" failed

Result: TCP connectivity check failed for subnet "192.168.0.0"

Some of the node connectivity checks also fail. Oddly enough, cluvfy's own nodereach and nodecon checks pass.

ERROR:

PRVF-5449 : Check of Voting Disk location "/dev/sdb2(/dev/sdb2)" failed on the following nodes:

rac2

rac2:GetFileInfo command failed.

PRVF-5431 : Oracle Cluster Voting Disk configuration check failed

Even though cvuqdisk-1.0.9-1.rpm is installed, the sharedness check for the vote disk fails on the remote node.
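The free space and patch checks that cluvfy could not complete on the remote node can be repeated by hand. The following is a minimal sketch, not part of the original procedure: the function names are made up for illustration, passwordless ssh between the nodes is assumed, and the patch check simply looks for the patch number as a whole word in the `opatch lsinventory` output.

```shell
#!/bin/sh
# Sketch only: manual stand-ins for two checks cluvfy could not complete remotely.

# 7.5GB, the requirement shown in the cluvfy report, expressed in KB.
REQUIRED_KB=7864320

# Read `df -Pk <path>` output on stdin and print the Available column (KB).
avail_kb() {
    awk 'NR == 2 { print $4 }'
}

# Succeed when the KB value in $1 meets the 7.5GB requirement.
enough_space() {
    [ "$1" -ge "$REQUIRED_KB" ]
}

# Read `opatch lsinventory` output on stdin; succeed if patch id $1 appears in it.
patch_listed() {
    grep -qw -- "$1"
}

# Possible usage from rac1 (assumes passwordless ssh and opatch on the PATH of rac2):
#   kb=$(ssh rac2 df -Pk /tmp | avail_kb); enough_space "$kb" && echo "rac2:/tmp ok"
#   ssh rac2 opatch lsinventory -oh /opt/crs/oracle/product/11.1.0/crs | patch_listed 11724953
```

Both helpers read from standard input, so the same functions work whether the output comes from the local node or over ssh from the remote one.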

Apart from cluvfy, raccheck could also be used to evaluate the upgrade readiness.

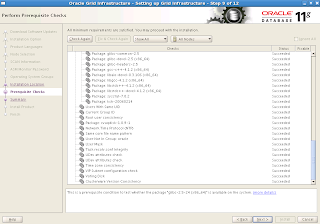

raccheck -u -o preEven though cluvfy fails to evaluate certain per-requisites OUI is able to evaluate all without any issue. Below is the output from the OUI

Create additional user groups for ASM administration (refer to the previous post) and begin the clusterware upgrade. It is possible to upgrade ASM after the clusterware upgrade, but in this case ASM is upgraded at the same time as the clusterware. This is an out-of-place rolling upgrade. The clusterware stack will be up until rootupgrade.sh is run. Versions before the upgrade:

[oracle@rac1 ~]$ crsctl query crs activeversion

Oracle Clusterware active version on the cluster is [11.1.0.7.0]

[oracle@rac1 ~]$ crsctl query crs softwareversion rac1

Oracle Clusterware version on node [rac1] is [11.1.0.7.0]

[oracle@rac1 ~]$ crsctl query crs softwareversion rac2

Oracle Clusterware version on node [rac2] is [11.1.0.7.0]

[oracle@rac1 ~]$ crsctl query crs releaseversion

11.1.0.7.0

Summary page

Rootupgrade execution output from the rac1 node. This will update the software version, but the active version will remain at the lower 11.1 version until all nodes are upgraded.

[root@rac1 ~]# /opt/app/11.2.0/grid/rootupgrade.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /opt/app/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The file "oraenv" already exists in /usr/local/bin. Overwrite it? (y/n)

[n]: y

Copying oraenv to /usr/local/bin ...

The file "coraenv" already exists in /usr/local/bin. Overwrite it? (y/n)

[n]: y

Copying coraenv to /usr/local/bin ...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /opt/app/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

Installing Trace File Analyzer

OLR initialization - successful

root wallet

root wallet cert

root cert export

peer wallet

profile reader wallet

pa wallet

peer wallet keys

pa wallet keys

peer cert request

pa cert request

peer cert

pa cert

peer root cert TP

profile reader root cert TP

pa root cert TP

peer pa cert TP

pa peer cert TP

profile reader pa cert TP

profile reader peer cert TP

peer user cert

pa user cert

Replacing Clusterware entries in inittab

clscfg: EXISTING configuration version 4 detected.

clscfg: version 4 is 11 Release 1.

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

[oracle@rac1 ~]$ crsctl query crs activeversion

Oracle Clusterware active version on the cluster is [11.1.0.7.0]

[oracle@rac1 ~]$ crsctl query crs softwareversion

Oracle Clusterware version on node [rac1] is [11.2.0.4.0]

Rootupgrade output from node rac2 (the last node). Once it completes, the active version is updated to 11.2.0.4.

[root@rac2 ~]# /opt/app/11.2.0/grid/rootupgrade.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /opt/app/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The file "oraenv" already exists in /usr/local/bin. Overwrite it? (y/n)

[n]: y

Copying oraenv to /usr/local/bin ...

The file "coraenv" already exists in /usr/local/bin. Overwrite it? (y/n)

[n]: y

Copying coraenv to /usr/local/bin ...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /opt/app/11.2.0/grid/crs/install/crsconfig_params

Creating trace directory

Installing Trace File Analyzer

OLR initialization - successful

Replacing Clusterware entries in inittab

clscfg: EXISTING configuration version 5 detected.

clscfg: version 5 is 11g Release 2.

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Start upgrade invoked..

Started to upgrade the Oracle Clusterware. This operation may take a few minutes.

Started to upgrade the OCR.

Started to upgrade the CSS.

Started to upgrade the CRS.

The CRS was successfully upgraded.

Successfully upgraded the Oracle Clusterware.

Oracle Clusterware operating version was successfully set to 11.2.0.4.0

Configure Oracle Grid Infrastructure for a Cluster ... succeeded
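The version queries run around rootupgrade.sh can be compared in a script to tell whether the rolling upgrade has finished. This is a minimal sketch under the assumption that the crsctl messages keep the bracketed format shown in this post; the function names are illustrative only.

```shell
#!/bin/sh
# Print the last [bracketed] value of each input line, for example
# "Oracle Clusterware version on node [rac1] is [11.2.0.4.0]" gives 11.2.0.4.0.
bracket_version() {
    sed -n 's/.*\[\([^]]*\)\][^[]*$/\1/p'
}

# Succeed when the active version ($1) has caught up with the software version ($2).
upgrade_complete() {
    [ "$1" = "$2" ]
}

# Possible usage on a cluster node:
#   active=$(crsctl query crs activeversion | bracket_version)
#   software=$(crsctl query crs softwareversion | bracket_version)
#   upgrade_complete "$active" "$software" || echo "rolling upgrade still in progress"
```

While only some nodes have run rootupgrade.sh, the two values differ (11.1.0.7.0 against 11.2.0.4.0 in this environment), so the comparison fails until the last node is done.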

[oracle@rac2 ~]$ crsctl query crs activeversion

Oracle Clusterware active version on the cluster is [11.2.0.4.0]

Once the OK button on the "Execute Configuration Script" dialog is clicked, a set of configuration assistants is executed, ASMCA among them. The ASM upgrade is done in a rolling fashion, and the following could be seen in the ASM alert log.

Tue Oct 15 12:52:37 2013

ALTER SYSTEM START ROLLING MIGRATION TO 11.2.0.4.0

Once the configuration assistants have run, the clusterware upgrade is complete. It must also be noted that the upgraded environment had the OCR and vote disk on block devices.

[oracle@rac1 ~]$ crsctl query css votedisk

##  STATE    File Universal Id                File Name Disk group

--  -----    -----------------                --------- ---------

 1. ONLINE   9687be081c784f98bf9166d561d875b0 (/dev/sdb2) []

Located 1 voting disk(s).

If an upgrade to 12c (from 11.2) is planned, these must be moved to an ASM diskgroup.
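Voting disks that are still outside ASM can be picked out of the `crsctl query css votedisk` output by looking for an empty disk group column. The sketch below assumes the column layout shown in this post; the function name is illustrative, and the commands in the trailing comment are only a pointer (DATA is one of the disk groups in this environment), so the Oracle documentation should be checked before moving anything.

```shell
#!/bin/sh
# Read `crsctl query css votedisk` output on stdin and print the file name of
# every voting disk whose disk group column is empty ("[]").
votedisks_outside_asm() {
    awk '$1 ~ /^[0-9]+\.$/ && $NF == "[]" {
        gsub(/[()]/, "", $(NF-1))   # strip the parentheses around the file name
        print $(NF-1)
    }'
}

# Possible usage:
#   crsctl query css votedisk | votedisks_outside_asm
# Moving the files is typically done as root with commands such as
#   crsctl replace votedisk +DATA
#   ocrconfig -add +DATA   (followed by ocrconfig -delete of the old location)
```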

Check and change the auto start status of certain resources so they are always brought up.

>'BEGIN {printf "%-35s %-25s %-18s\n", "Resource Name", "Type", "Auto Start State";

> printf "%-35s %-25s %-18s\n", "-----------", "------", "----------------";}'

Resource Name Type Auto Start State

----------- ------ ----------------

[oracle@rac1 ~]$ crsctl stat res -p | egrep -w "NAME|TYPE|AUTO_START" | grep -v DEFAULT_TEMPLATE | awk \

>'BEGIN { FS="="; state = 0; }

> $1~/NAME/ {appname = $2; state=1};

> state == 0 {next;}

> $1~/TYPE/ && state == 1 {apptarget = $2; state=2;}

> $1~/AUTO_START/ && state == 2 {appstate = $2; state=3;}

> state == 3 {printf "%-35s %-25s %-18s\n", appname, apptarget, appstate; state=0;}'

ora.DATA.dg ora.diskgroup.type never

ora.FLASH.dg ora.diskgroup.type never

ora.LISTENER.lsnr ora.listener.type restore

ora.LISTENER_SCAN1.lsnr ora.scan_listener.type restore

ora.asm ora.asm.type never

ora.cvu ora.cvu.type restore

ora.gsd ora.gsd.type always

ora.net1.network ora.network.type restore

ora.oc4j ora.oc4j.type restore

ora.ons ora.ons.type always

ora.rac1.vip ora.cluster_vip_net1.type restore

ora.rac11g1.db application 1

ora.rac11g1.rac11g11.inst application 1

ora.rac11g1.rac11g12.inst application 1

ora.rac11g1.bx.cs application 1

ora.rac11g1.bx.rac11g11.srv application restore

ora.rac11g1.bx.rac11g12.srv application restore

ora.rac2.vip ora.cluster_vip_net1.type restore

ora.registry.acfs ora.registry.acfs.type restore

ora.scan1.vip ora.scan_vip.type restore

Remove the old 11.1 clusterware installation.

During the database shutdown (and before the DB was upgraded to the 11.2 version), the following was seen during the cluster resource stop.

CRS-5809: Failed to execute 'ACTION_SCRIPT' value of '/opt/crs/oracle/product/11.1.0/crs/bin/racgwrap' for 'ora.rac11g1.db'. Error information 'cmd /opt/crs/oracle/product/11.1.0/crs/bin/racgwrap not found', Category : -2, OS error : 2

CRS-2678: 'ora.rac11g1.db' on 'rac1' has experienced an unrecoverable failure

CRS-0267: Human intervention required to resume its availability.

This was due to the ACTION_SCRIPT attribute not being updated to reflect the new clusterware location. To fix this, start the clusterware stack and run the following

[root@rac1 oracle]# crsctl modify resource ora.rac11g1.db -attr "ACTION_SCRIPT=/opt/app/11.2.0/grid/bin/racgwrap"

"/opt/app/11.2.0/grid" is the new clusterware home. More on this is available in Pre 11.2 Database Issues in 11gR2 Grid Infrastructure Environment [948456.1]
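To see whether other resources still point at the removed home, the `NAME=` and `ACTION_SCRIPT=` lines of `crsctl stat res -p` can be scanned. This is a rough sketch, not something done in the original upgrade: the function name is made up, and it assumes each resource prints its `NAME` line before its `ACTION_SCRIPT` line, as in the attribute listing used earlier for AUTO_START.

```shell
#!/bin/sh
# Read `crsctl stat res -p` output on stdin and print the name of every resource
# whose ACTION_SCRIPT is still located under the old clusterware home ($1).
stale_action_scripts() {
    awk -F= -v old="$1" '
        $1 == "NAME"                                     { name = $2 }
        $1 == "ACTION_SCRIPT" && index($2, old "/") == 1 { print name }
    '
}

# Possible usage:
#   crsctl stat res -p | stale_action_scripts /opt/crs/oracle/product/11.1.0/crs
```

Each name printed is a candidate for the same `crsctl modify resource ... -attr "ACTION_SCRIPT=..."` fix shown above.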

Post clusterware installation status can be checked with cluvfy and raccheck.

cluvfy stage -post crsinst -n rac1,rac2

./raccheck -u -o post

This concludes the upgrade of the clusterware and ASM. The next step is the upgrade of the database.

The database software upgrade is an out-of-place upgrade. Though in-place upgrades are possible for database software, they are not recommended. Unlike in the previous post, in this upgrade the database software is installed first and then the database is upgraded. Check the database installation readiness with

cluvfy stage -pre dbinst -n rac1,rac2 -verbose -fixup

Unset the ORACLE_HOME variable and run the installation.

Give a location different from the current ORACLE_HOME to proceed with the out-of-place upgrade.

Once the database software is installed, the next step is to upgrade the database. Copy utlu112i.sql from the 11.2 ORACLE_HOME/rdbms/admin to a location outside ORACLE_HOME and run it. The pre-upgrade information tool utlu112i.sql will list any work needed before the upgrade.

For 11.1.0.7 to 11.2.0.4 upgrades, if the database timezone file version is lower than 14, no additional patches are needed before the upgrade. However, it is recommended to upgrade the database to the 11.2.0.4 timezone version once the upgrade is done. The timezone upgrade can also be done at the same time as the database upgrade using DBUA. More on 1562142.1

SQL> @utlu112i.sql

SQL> SET SERVEROUTPUT ON FORMAT WRAPPED;

SQL> -- Linesize 100 for 'i' version 1000 for 'x' version

SQL> SET ECHO OFF FEEDBACK OFF PAGESIZE 0 LINESIZE 100;

Oracle Database 11.2 Pre-Upgrade Information Tool 10-15-2013 15:22:44

Script Version: 11.2.0.4.0 Build: 001

.

**********************************************************************

Database:

**********************************************************************

--> name: RAC11G1

--> version: 11.1.0.7.0

--> compatible: 11.1.0.0.0

--> blocksize: 8192

--> platform: Linux x86 64-bit

--> timezone file: V4

.

**********************************************************************

Tablespaces: [make adjustments in the current environment]

**********************************************************************

--> SYSTEM tablespace is adequate for the upgrade.

.... minimum required size: 1100 MB

--> SYSAUX tablespace is adequate for the upgrade.

.... minimum required size: 1445 MB

--> UNDOTBS1 tablespace is adequate for the upgrade.

.... minimum required size: 400 MB

--> TEMP tablespace is adequate for the upgrade.

.... minimum required size: 60 MB

.

**********************************************************************

Flashback: OFF

**********************************************************************

**********************************************************************

Update Parameters: [Update Oracle Database 11.2 init.ora or spfile]

Note: Pre-upgrade tool was run on a lower version 64-bit database.

**********************************************************************

--> If Target Oracle is 32-Bit, refer here for Update Parameters:

-- No update parameter changes are required.

.

--> If Target Oracle is 64-Bit, refer here for Update Parameters:

-- No update parameter changes are required.

.

**********************************************************************

Renamed Parameters: [Update Oracle Database 11.2 init.ora or spfile]

**********************************************************************

-- No renamed parameters found. No changes are required.

.

**********************************************************************

Obsolete/Deprecated Parameters: [Update Oracle Database 11.2 init.ora or spfile]

**********************************************************************

-- No obsolete parameters found. No changes are required

.

**********************************************************************

Components: [The following database components will be upgraded or installed]

**********************************************************************

--> Oracle Catalog Views [upgrade] VALID

--> Oracle Packages and Types [upgrade] VALID

--> JServer JAVA Virtual Machine [upgrade] VALID

--> Oracle XDK for Java [upgrade] VALID

--> Real Application Clusters [upgrade] VALID

--> Oracle Workspace Manager [upgrade] VALID

--> OLAP Analytic Workspace [upgrade] VALID

--> OLAP Catalog [upgrade] VALID

--> EM Repository [upgrade] VALID

--> Oracle Text [upgrade] VALID

--> Oracle XML Database [upgrade] VALID

--> Oracle Java Packages [upgrade] VALID

--> Oracle interMedia [upgrade] VALID

--> Spatial [upgrade] VALID

--> Oracle Ultra Search [upgrade] VALID

--> Expression Filter [upgrade] VALID

--> Rule Manager [upgrade] VALID

--> Oracle Application Express [upgrade] VALID

... APEX will only be upgraded if the version of APEX in

... the target Oracle home is higher than the current one.

--> Oracle OLAP API [upgrade] VALID

.

**********************************************************************

Miscellaneous Warnings

**********************************************************************

WARNING: --> The "cluster_database" parameter is currently "TRUE"

.... and must be set to "FALSE" prior to running a manual upgrade.

WARNING: --> Database is using a timezone file older than version 14.

.... After the release migration, it is recommended that DBMS_DST package

.... be used to upgrade the 11.1.0.7.0 database timezone version

.... to the latest version which comes with the new release.

WARNING: --> Database contains INVALID objects prior to upgrade.

.... The list of invalid SYS/SYSTEM objects was written to

.... registry$sys_inv_objs.

.... The list of non-SYS/SYSTEM objects was written to

.... registry$nonsys_inv_objs.

.... Use utluiobj.sql after the upgrade to identify any new invalid

.... objects due to the upgrade.

.... USER ASANGA has 1 INVALID objects.

WARNING: --> EM Database Control Repository exists in the database.

.... Direct downgrade of EM Database Control is not supported. Refer to the

.... Upgrade Guide for instructions to save the EM data prior to upgrade.

WARNING: --> Ultra Search is not supported in 11.2 and must be removed

.... prior to upgrading by running rdbms/admin/wkremov.sql.

.... If you need to preserve Ultra Search data

.... please perform a manual cold backup prior to upgrade.

WARNING: --> Your recycle bin contains 4 object(s).

.... It is REQUIRED that the recycle bin is empty prior to upgrading

.... your database. The command:

PURGE DBA_RECYCLEBIN

.... must be executed immediately prior to executing your upgrade.

WARNING: --> Database contains schemas with objects dependent on DBMS_LDAP package.

.... Refer to the 11g Upgrade Guide for instructions to configure Network ACLs.

.... USER WKSYS has dependent objects.

.... USER FLOWS_030000 has dependent objects.

.

**********************************************************************

Recommendations

**********************************************************************

Oracle recommends gathering dictionary statistics prior to

upgrading the database.

To gather dictionary statistics execute the following command

while connected as SYSDBA:

EXECUTE dbms_stats.gather_dictionary_stats;

**********************************************************************

Oracle recommends removing all hidden parameters prior to upgrading.

To view existing hidden parameters execute the following command

while connected AS SYSDBA:

SELECT name,description from SYS.V$PARAMETER WHERE name

LIKE '\_%' ESCAPE '\'

Changes will need to be made in the init.ora or spfile.

**********************************************************************

Oracle recommends reviewing any defined events prior to upgrading.

To view existing non-default events execute the following commands

while connected AS SYSDBA:

Events:

SELECT (translate(value,chr(13)||chr(10),'')) FROM sys.v$parameter2

WHERE UPPER(name) ='EVENT' AND isdefault='FALSE'

Trace Events:

SELECT (translate(value,chr(13)||chr(10),'')) from sys.v$parameter2

WHERE UPPER(name) = '_TRACE_EVENTS' AND isdefault='FALSE'

Changes will need to be made in the init.ora or spfile.

**********************************************************************

Elapsed: 00:00:01.26
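The report is long, and the items needing action are the `WARNING: -->` entries. If the output is spooled to a file, the headlines can be pulled out as in the sketch below. The spool file name is hypothetical and the function name is illustrative; the pattern relies on the warning prefix shown in the report above.

```shell
#!/bin/sh
# Read a spooled utlu112i.sql report on stdin and print the headline of each warning,
# dropping the "WARNING: --> " prefix and the "...." continuation lines.
preupgrade_warnings() {
    sed -n 's/^WARNING: --> //p'
}

# Possible usage (preupgrade.log is a hypothetical spool file name):
#   preupgrade_warnings < preupgrade.log
#   preupgrade_warnings < preupgrade.log | wc -l    # number of warnings
```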

If there is a large number of records in the AUD$ and FGA_LOG$ tables, pre-processing these tables could speed up the database upgrade. More on 1329590.1

A large number of files in the $ORACLE_HOME/`hostname -s`_$ORACLE_SID/sysman/emd/upload location could also lengthen the upgrade time. Refer to 870814.1 and 837570.1 for more information.

Upgrade summary before DBUA is executed

Upgrade summary after the upgrade

Since _external_scn_rejection_threshold_hours is set to 24 by default in 11.2 versions, commenting out this parameter after the upgrade is not a problem.

Check the timezone values of the upgraded database.

SQL> SELECT PROPERTY_NAME, SUBSTR(property_value, 1, 30) value

2 FROM DATABASE_PROPERTIES

3 WHERE PROPERTY_NAME LIKE 'DST_%'

4 ORDER BY PROPERTY_NAME;

PROPERTY_NAME                  VALUE

------------------------------ ----------

DST_PRIMARY_TT_VERSION         14

DST_SECONDARY_TT_VERSION       0

DST_UPGRADE_STATE              NONE

SQL> SELECT VERSION FROM v$timezone_file;

VERSION

----------

14

SQL> select TZ_VERSION from registry$database;

TZ_VERSION

----------

4

If the timezone_file value and the value shown in the database registry differ, the registry could be updated as per 1509653.1

SQL> update registry$database set TZ_VERSION = (select version FROM v$timezone_file);

1 row updated.

SQL> commit;

Commit complete.

SQL> select TZ_VERSION from registry$database;

TZ_VERSION

----------

14

The remote listener parameter will contain both the SCAN VIP and the pre-11.2 value. This could be reset to have only the SCAN IPs.

SQL> select name,value from v$parameter where name='remote_listener';

NAME            VALUE

--------------- ----------------------------------------

remote_listener LISTENERS_RAC11G1, rac-scan-vip:1521

Finally, update the compatible parameter to 11.2.0.4 once the upgrade is deemed satisfactory. This concludes the upgrade from 11.1.0.7 to 11.2.0.4.
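The two follow-up parameter changes mentioned above (remote_listener and compatible) could be prepared as a small SQL script. The sketch below only prints the statements and does not run them; the function name is illustrative, and the SCAN name and port are the values from this environment. Keep in mind that raising compatible needs an instance restart and cannot be reversed by simply setting it back, so it should only be done once the upgrade is accepted.

```shell
#!/bin/sh
# Print the ALTER SYSTEM statements for the two post-upgrade parameter changes.
#   $1: SCAN name and port for remote_listener
#   $2: target value for the compatible parameter
post_upgrade_sql() {
    printf "ALTER SYSTEM SET remote_listener='%s' SCOPE=BOTH SID='*';\n" "$1"
    printf "ALTER SYSTEM SET compatible='%s' SCOPE=SPFILE SID='*';\n" "$2"
}

# Possible usage: review the statements, then run them in SQL*Plus as SYSDBA.
#   post_upgrade_sql rac-scan-vip:1521 11.2.0.4.0
```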

Useful metalink notes

RACcheck Upgrade Readiness Assessment [ID 1457357.1]

Complete Checklist for Manual Upgrades to 11gR2 [ID 837570.1]

Complete Checklist to Upgrade the Database to 11gR2 using DBUA [ID 870814.1]

Pre 11.2 Database Issues in 11gR2 Grid Infrastructure Environment [ID 948456.1]

Things to Consider Before Upgrading to 11.2.0.3 Grid Infrastructure/ASM [ID 1363369.1]

Actions For DST Updates When Upgrading To Or Applying The 11.2.0.4 Patchset [ID 1579838.1]

How to Pre-Process SYS.AUD$ Records Pre-Upgrade From 10.1 or later to 11gR1 or later. [ID 1329590.1]

Things to Consider Before Upgrading to 11.2.0.3 to Avoid Poor Performance or Wrong Results [ID 1392633.1]

Related Posts

Upgrading from 10.2.0.4 to 10.2.0.5 (Clusterware, RAC, ASM)

Upgrade from 10.2.0.5 to 11.2.0.3 (Clusterware, RAC, ASM)

Upgrade from 11.1.0.7 to 11.2.0.3 (Clusterware, ASM & RAC)

Upgrading from 11.1.0.7 to 11.2.0.3 with Transient Logical Standby

Upgrading from 11.2.0.1 to 11.2.0.3 with in-place upgrade for RAC

In-place upgrade from 11.2.0.2 to 11.2.0.3

Upgrading from 11.2.0.2 to 11.2.0.3 with Physical Standby - 1

Upgrading from 11.2.0.2 to 11.2.0.3 with Physical Standby - 2

Upgrading from 11gR2 (11.2.0.3) to 12c (12.1.0.1) Grid Infrastructure